Introduction

When several cameras share the bandwidth of one network link, packet collisions often reduce the frame rate and produce incomplete images. This application note shows how to interleave packets to avoid those collisions and describes two methods for synchronizing the cameras.

Synchronizing the cameras keeps the timing of the interleaved packets from drifting over time. Two methods of synchronization are discussed:

1. PTPSync (built-in firmware)

2. External hardware trigger

For the purposes of this application note, four IMX265 cameras were connected over a 1 Gbps network via a switch. However, the methods outlined in this app note apply to all LUCID cameras.

Prerequisites

- Arena SDK 1.0.31.8 or newer

- Firmware version with PTPSync support

- Microsoft Visual Studio 2015

Equipment Used

- 4 x PHX032S-C cameras

- Netgear MS510TXPP switch

- Intel I350 Gigabit 1000BaseT Network Ethernet Adapter

- PC with the following specifications:

  - ASUS PRIME Z370-A

  - Intel Core i7-8700 3.2 GHz

  - 16 GB DDR4 RAM

Delay Calculator

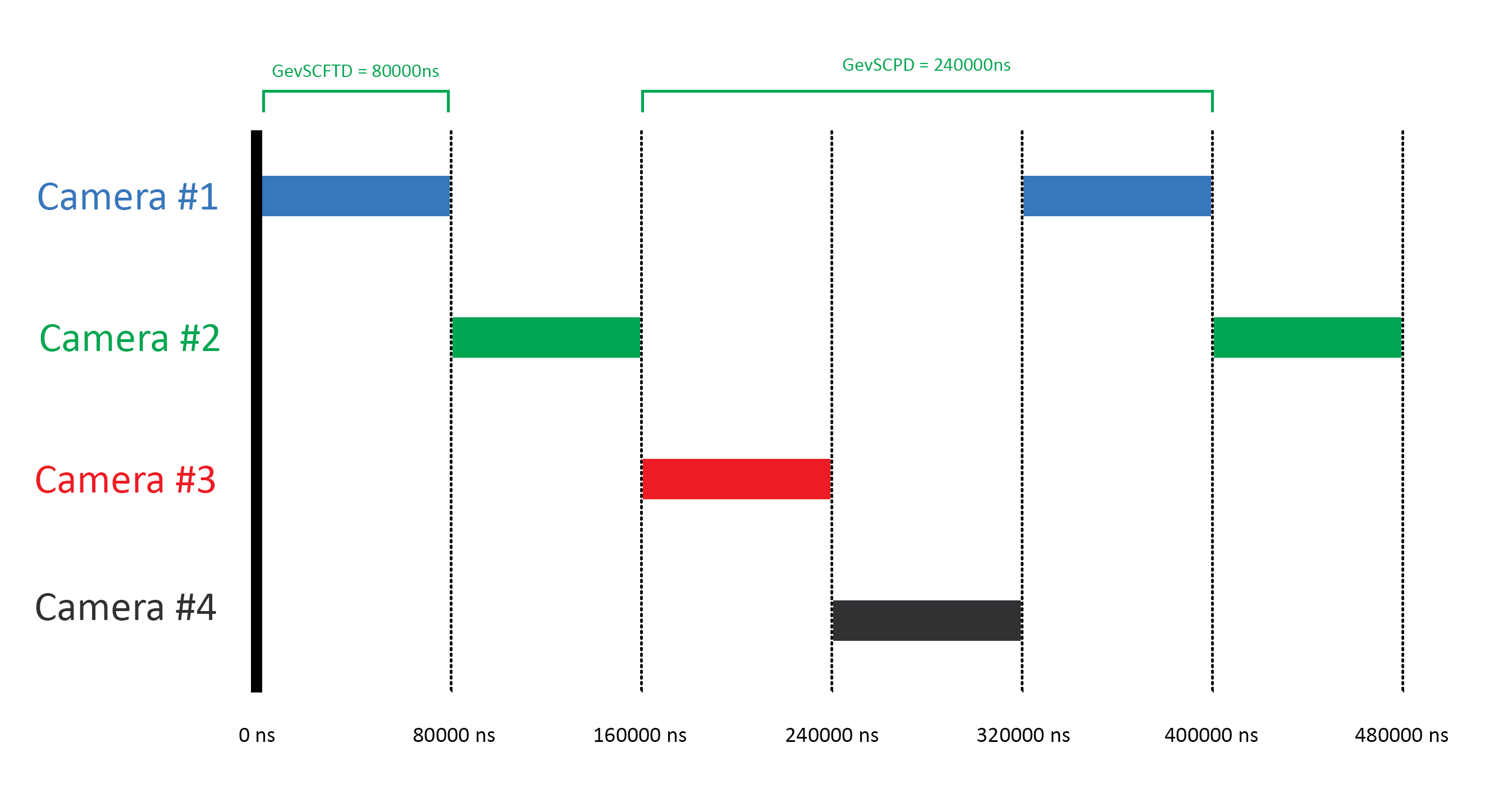

To interleave the packets, we will use two types of delay:

- Stream Channel Packet Delay (GevSCPD) – This node controls the delay (in GEV timestamp counter units) to insert between each packet for the stream channel.

- Stream Channel Frame Transmission Delay (GevSCFTD) – This node controls the delay before transmission starts on the camera side, i.e. the wait time between when the camera is ready to transmit an image and when the image is actually transmitted.

The formula to calculate these delays is as follows:
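Read back from the worked example in the next section, the calculation can be written as shown here. The symbol names are chosen for illustration and are not from the original note: $S$ is the link speed in bytes per second, $P$ the packet size in bytes, $b$ the buffer as a fraction, $N$ the number of cameras, and $k$ the 1-based camera index.

```latex
\begin{align*}
t_{\text{packet}} &= \frac{P}{S} \\
t_{\text{slot}} &= t_{\text{packet}}\,(1 + b) \\
\text{GevSCPD} &= (N - 1)\, t_{\text{slot}} \\
\text{GevSCFTD}_k &= (k - 1)\, t_{\text{slot}}, \qquad k = 1, \dots, N
\end{align*}
```

Each camera therefore leaves a gap after every packet that is wide enough for one packet from each of the other cameras, and camera $k$ starts its frame $k - 1$ slots late so that its packets fall into its own gap.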

Delay Calculator Example

Here is an example using 4 cameras:

Device Link Speed = 1 Gbps = 125000000 Bps

Time to transfer 1 byte = 1 / (125000000 Bps) = 0.000000008 s = 8 ns

Packet Size = 9014 Bytes

Delay for one packet = 9014 Bytes * 8 ns = 72112 ns

Buffer = 10.93%

Packet Delay for 1 camera = 72112 ns + (10.93% of 72112 ns) ≈ 80000 ns

Transmission Delay for 4 cameras = 3 x 80000 ns = 240000 ns

- Camera 1

  - Packet Delay (GevSCPD) = 240000 ns

  - Transmission Delay (GevSCFTD) = 0 ns

- Camera 2

  - Packet Delay (GevSCPD) = 240000 ns

  - Transmission Delay (GevSCFTD) = 80000 ns

- Camera 3

  - Packet Delay (GevSCPD) = 240000 ns

  - Transmission Delay (GevSCFTD) = 160000 ns

- Camera 4

  - Packet Delay (GevSCPD) = 240000 ns

  - Transmission Delay (GevSCFTD) = 240000 ns
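The per-camera values above follow a simple pattern, which the following minimal sketch reproduces for any number of cameras. It is independent of the Arena SDK; the names `CameraDelays`, `SlotNs`, and `ComputeDelays` are hypothetical helpers introduced here, and the sketch assumes the delay nodes count in nanoseconds, as the example does.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Delay settings for one camera, in nanoseconds (hypothetical helper type).
struct CameraDelays
{
    int64_t packetDelayNs;       // value intended for GevSCPD
    int64_t transmissionDelayNs; // value intended for GevSCFTD
};

// Time slot reserved for one packet: time on the wire plus a safety buffer.
int64_t SlotNs(double linkBytesPerSecond, int64_t packetSizeBytes, double bufferFraction)
{
    assert(linkBytesPerSecond > 0.0 && packetSizeBytes > 0 && bufferFraction >= 0.0);
    const double packetNs = packetSizeBytes * (1e9 / linkBytesPerSecond);
    return static_cast<int64_t>(std::llround(packetNs * (1.0 + bufferFraction)));
}

// Every camera waits (N - 1) slots between its own packets; the camera at
// 0-based index k delays the start of each frame by k slots.
std::vector<CameraDelays> ComputeDelays(size_t cameraCount, int64_t slotNs)
{
    assert(cameraCount > 0);
    std::vector<CameraDelays> delays;
    for (size_t k = 0; k < cameraCount; k++)
    {
        delays.push_back({static_cast<int64_t>(cameraCount - 1) * slotNs,
                          static_cast<int64_t>(k) * slotNs});
    }
    return delays;
}
```

With the figures from this note, `SlotNs(125000000.0, 9014, 0.1093)` comes to 79994 ns, which the example rounds to 80000 ns. `ComputeDelays(4, 80000)` then gives a packet delay of 240000 ns for every camera and transmission delays of 0, 80000, 160000, and 240000 ns.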

Figure 1: This diagram shows the packet delay (GevSCPD) and transmission delay (GevSCFTD) for camera #2 in a 4xIMX265 system

Synchronization using PTPSync

First of all, what is the Precision Time Protocol (PTP)?

Precision Time Protocol (PTP or IEEE 1588) is an IEEE standard that has been integrated into GigE Vision 2.0. It is a method of synchronizing the clocks of multiple devices on an Ethernet network. This is achieved by setting one device as the master clock and having all other devices periodically synchronize and adjust to it. The synchronization is handled automatically by the devices once the master and slave devices have been set.
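To give a feel for what "adjusting to the master clock" involves, here is a minimal sketch of the offset arithmetic in the IEEE 1588 delay request-response exchange. It is illustrative only: the cameras do this internally, the function and type names are hypothetical, and the calculation assumes the network path takes equally long in both directions.

```cpp
#include <cstdint>

// Result of one exchange, in nanoseconds (hypothetical helper type).
struct PtpEstimate
{
    int64_t meanPathDelayNs;
    int64_t offsetFromMasterNs;
};

// t1: master sends Sync           (master clock)
// t2: slave receives Sync         (slave clock)
// t3: slave sends Delay_Req       (slave clock)
// t4: master receives Delay_Req   (master clock)
PtpEstimate EstimateOffset(int64_t t1, int64_t t2, int64_t t3, int64_t t4)
{
    const int64_t masterToSlave = t2 - t1; // path delay + clock offset
    const int64_t slaveToMaster = t4 - t3; // path delay - clock offset
    const int64_t meanPathDelay = (masterToSlave + slaveToMaster) / 2;
    return {meanPathDelay, masterToSlave - meanPathDelay};
}
```

For example, with a slave clock running 500 ns ahead and a 100 ns path delay, the timestamps 1000, 1600, 2000, and 1600 give a mean path delay of 100 ns and an offset of 500 ns, which the slave would then subtract from its clock.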

PTP can be used with LUCID cameras in several ways.

PTPSync is a built-in firmware feature that schedules 4 advance action commands in the firmware itself as opposed to scheduling using the SDK. Scheduling the action commands within the firmware eliminates overhead in the application code and helps achieve a higher frame rate.

This section describes the steps to enable PTP and set up PTPSync. Complete example code that uses PTPSync can be found at the bottom of this page.

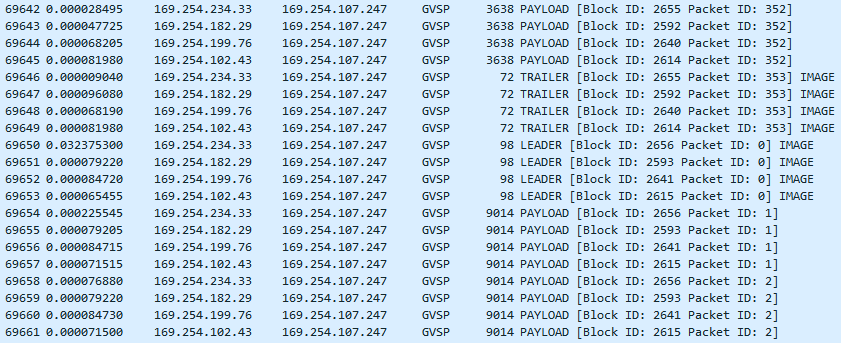

Figure 2: Wireshark logs show the interleaving of packets achieved from using PTPSync

Step 1: Enable PTP

Arena::SetNodeValue<bool>(pDevice->GetNodeMap(), "PtpEnable", true);

Step 2: Confirm PTP Status

Make sure that the PtpStatus node of every camera reports either “Master” or “Slave”. Any other value means that PTP negotiation has not finished yet.

GenICam::gcstring ptpStatus = Arena::GetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "PtpStatus");
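With several cameras, the check needs to cover all of them at once: negotiation is finished only when exactly one camera is the master and the rest are slaves. The sketch below expresses that rule as a plain function over the status strings, mirroring the loop in the full example at the end of this note. `PtpNegotiationComplete` is a hypothetical helper, and it assumes the statuses have already been read from each camera's PtpStatus node.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Returns true once exactly one device reports "Master" and every other
// device reports "Slave". Any other status means negotiation is ongoing.
bool PtpNegotiationComplete(const std::vector<std::string>& statuses)
{
    size_t masters = 0;
    for (const std::string& status : statuses)
    {
        if (status == "Master")
            masters++;
        else if (status != "Slave")
            return false; // e.g. still uncalibrated
    }
    return masters == 1;
}
```

An application would typically poll the statuses about once per second and start streaming only after this returns true.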

Step 3: Turn on ‘PTPSync’ mode

Set acquisition start mode to “PTPSync”.

std::cout << TAB3 << "Set acquisition start mode to 'PTPSync'\n";
Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "AcquisitionStartMode", "PTPSync");

Step 4: Maximize AcquisitionFrameRate

When PTPSync mode is turned on, the AcquisitionFrameRate node no longer controls the actual frame rate, but it can still cap the maximum achievable frame rate. A separate node, PTPSyncFrameRate, sets the frame rate while PTPSync mode is on.

To avoid capping the frame rate, set AcquisitionFrameRate to its maximum value.

GenApi::CFloatPtr pAcquisitionFrameRate = pDevice->GetNodeMap()->GetNode("AcquisitionFrameRate");
pAcquisitionFrameRate->SetValue(pAcquisitionFrameRate->GetMax());

Step 5: Set PTPSyncFrameRate

The value of PTPSyncFrameRate should always be less than the value set in the AcquisitionFrameRate node.

GenApi::CFloatPtr pPTPSyncFrameRate = pDevice->GetNodeMap()->GetNode("PTPSyncFrameRate");
pPTPSyncFrameRate->SetValue(PTPSYNC_FRAME_RATE);

Step 6: Set the desired StreamChannelPacketDelay and StreamChannelFrameTransmissionDelay for each camera

This code can be used for setting Packet Delay and Transmission Delay calculated using the above formula.

GenApi::CIntegerPtr pStreamChannelPacketDelay = pDevice->GetNodeMap()->GetNode("GevSCPD");
pStreamChannelPacketDelay->SetValue(240000);

GenApi::CIntegerPtr pStreamChannelFrameTransmissionDelay = pDevice->GetNodeMap()->GetNode("GevSCFTD");
pStreamChannelFrameTransmissionDelay->SetValue(80000);

Synchronization using External Hardware Trigger

If you are using an external source to trigger the cameras, you can apply the same packet delay and transmission delay settings that you calculated using the formula above.

Pay attention to the following settings so that packets are properly interleaved:

- Only use “RisingEdge”, “FallingEdge” or “AnyEdge” for the TriggerActivation node. Using “LevelHigh” or “LevelLow” will not work to interleave the packets.

- Make sure the frequency of the trigger source takes into account the drop in frame rate because of packet delay and transmission delay.
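To judge how far the trigger frequency has to drop, a rough upper bound can be estimated from the delays. The sketch below is a simplified model, not a LUCID formula: it assumes every packet is followed by the full GevSCPD gap, that GevSCFTD is paid once per frame, and it ignores exposure and sensor readout. The function names and the payload-per-packet parameter are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

// Rough time to send one frame, in nanoseconds, under the assumptions above.
int64_t FrameTransferNs(int64_t imageBytes, int64_t payloadBytesPerPacket,
                        int64_t packetWireNs, int64_t packetDelayNs,
                        int64_t transmissionDelayNs)
{
    assert(imageBytes > 0 && payloadBytesPerPacket > 0);
    // Number of packets, rounded up so a partial last packet is counted.
    const int64_t packets = (imageBytes + payloadBytesPerPacket - 1) / payloadBytesPerPacket;
    return transmissionDelayNs + packets * (packetWireNs + packetDelayNs);
}

// Upper bound on the trigger frequency in Hz for a given frame transfer time.
double MaxTriggerHz(int64_t frameTransferNs)
{
    assert(frameTransferNs > 0);
    return 1e9 / static_cast<double>(frameTransferNs);
}
```

As an illustration with assumed numbers, a 3145728-byte image split into 8000-byte payloads, with 72112 ns per packet on the wire, a 240000 ns packet delay, and a 240000 ns transmission delay, takes about 123.2 ms per frame, so the trigger source should stay below roughly 8 Hz.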

Step 1: Enable PTP (Optional)

Arena::SetNodeValue<bool>(pDevice->GetNodeMap(), "PtpEnable", true);

Step 2: Setup Trigger Mode

In this example we will be using Line0 as the input line. In case you are using a different line, please make sure it is properly configured under “Digital IO Control”.

Only use “RisingEdge”, “FallingEdge” or “AnyEdge” for the TriggerActivation node.

Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "TriggerSelector", "FrameStart");
Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "TriggerSource", "Line0");
Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "TriggerActivation", "RisingEdge");
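If the activation mode comes from a configuration file or user input, a small guard can reject the level-sensitive modes before the value is written to the camera. This is a minimal sketch; `IsEdgeActivation` is a hypothetical helper, not part of the Arena SDK.

```cpp
#include <string>

// True only for the edge-sensitive modes that work with packet interleaving.
bool IsEdgeActivation(const std::string& activation)
{
    return activation == "RisingEdge" ||
           activation == "FallingEdge" ||
           activation == "AnyEdge";
}
```

An application could call this before setting TriggerActivation and report an error for “LevelHigh” or “LevelLow”.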

Step 3: Enable Trigger Mode

Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "TriggerMode", "On");

Step 4: Set the desired StreamChannelPacketDelay and StreamChannelFrameTransmissionDelay for each camera

GenApi::CIntegerPtr pStreamChannelPacketDelay = pDevice->GetNodeMap()->GetNode("GevSCPD");
pStreamChannelPacketDelay->SetValue(240000);

GenApi::CIntegerPtr pStreamChannelFrameTransmissionDelay = pDevice->GetNodeMap()->GetNode("GevSCFTD");
pStreamChannelFrameTransmissionDelay->SetValue(80000);

PTPSync Example Code

#include "stdafx.h"
#include "ArenaApi.h"

#include <algorithm> // for std::find
#include <chrono>    // for std::chrono::seconds
#include <iostream>  // for std::cout and std::cin
#include <thread>    // for std::this_thread::sleep_for
#include <vector>    // for std::vector

#define TAB1 "  "
#define TAB2 "    "
#define TAB3 "      "
#define ERASE_LINE "                            "

#define EXPOSURE_TIME 10000.0
#define PTPSYNC_FRAME_RATE 7.0

// =-=-=-=-=-=-=-=-=-
// =-=- SETTINGS =-=-
// =-=-=-=-=-=-=-=-=-

// Image timeout
//    Timeout for grabbing images (in milliseconds). If no image is available at
//    the end of the timeout, an exception is thrown. The timeout is the maximum
//    time to wait for an image; however, getting an image will return as soon as
//    an image is available, not waiting the full extent of the timeout.
#define TIMEOUT 20000

// number of images to grab
#define NUM_IMAGES 25000

// =-=-=-=-=-=-=-=-=-
// =-=- EXAMPLE -=-=-
// =-=-=-=-=-=-=-=-=-

void PTPSyncCamerasAndAcquireImages(Arena::ISystem* pSystem, std::vector<Arena::IDevice*>& devices)
{
    for (size_t i = 0; i < devices.size(); i++)
    {
        Arena::IDevice* pDevice = devices.at(i);
        GenICam::gcstring deviceSerialNumber = Arena::GetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "DeviceSerialNumber");

        std::cout << TAB2 << "Prepare camera " << deviceSerialNumber << "\n";

        // Manually set exposure time
        //    In order to get synchronized images, the exposure time must be
        //    synchronized as well.
        std::cout << TAB3 << "Exposure: ";
        Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "ExposureAuto", "Off");
        Arena::SetNodeValue<double>(pDevice->GetNodeMap(), "ExposureTime", EXPOSURE_TIME);
        std::cout << Arena::GetNodeValue<double>(pDevice->GetNodeMap(), "ExposureTime") << "\n";

        // Synchronize devices by enabling PTP
        //    Enabling PTP on multiple devices causes them to negotiate amongst
        //    themselves so that there is a single master device while all the
        //    rest become slaves. The slaves' clocks all synchronize to the
        //    master's clock.
        std::cout << TAB3 << "PTP: ";
        Arena::SetNodeValue<bool>(pDevice->GetNodeMap(), "PtpEnable", true);
        std::cout << (Arena::GetNodeValue<bool>(pDevice->GetNodeMap(), "PtpEnable") ? "enabled" : "disabled") << "\n";

        // Use max supported packet size. We use transfer control to ensure
        // that only one camera is transmitting at a time.
        std::cout << TAB3 << "StreamAutoNegotiatePacketSize: ";
        Arena::SetNodeValue<bool>(pDevice->GetTLStreamNodeMap(), "StreamAutoNegotiatePacketSize", true);
        std::cout << Arena::GetNodeValue<bool>(pDevice->GetTLStreamNodeMap(), "StreamAutoNegotiatePacketSize") << "\n";

        // Enable stream packet resend
        std::cout << TAB3 << "StreamPacketResendEnable: ";
        Arena::SetNodeValue<bool>(pDevice->GetTLStreamNodeMap(), "StreamPacketResendEnable", true);
        std::cout << Arena::GetNodeValue<bool>(pDevice->GetTLStreamNodeMap(), "StreamPacketResendEnable") << "\n";

        // Set acquisition mode to 'Continuous'
        std::cout << TAB3 << "Set acquisition mode to 'Continuous'\n";
        Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "AcquisitionMode", "Continuous");

        // Set acquisition start mode to 'PTPSync'
        std::cout << TAB3 << "Set acquisition start mode to 'PTPSync'\n";
        Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "AcquisitionStartMode", "PTPSync");

        // Set StreamBufferHandlingMode to 'NewestOnly'
        std::cout << TAB3 << "Set StreamBufferHandlingMode to 'NewestOnly'\n";
        Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetTLStreamNodeMap(), "StreamBufferHandlingMode", "NewestOnly");

        // Set pixel format to Mono8
        Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "PixelFormat", "Mono8");
        std::cout << TAB3 << "Set pixel format to 'Mono8'\n";

        // Packet delay and transmission delay from the delay calculator
        //    Every camera uses the same packet delay of 240000 ns. Camera i
        //    delays each frame by i slots of 80000 ns (0, 80000, 160000 and
        //    240000 ns). Only the first four cameras are configured, as in
        //    the 4-camera example.
        if (i < 4)
        {
            // Packet Delay
            GenApi::CIntegerPtr pStreamChannelPacketDelay = pDevice->GetNodeMap()->GetNode("GevSCPD");
            pStreamChannelPacketDelay->SetValue(240000);
            std::cout << TAB3 << "GevSCPD: ";
            std::cout << Arena::GetNodeValue<int64_t>(pDevice->GetNodeMap(), "GevSCPD") << "\n";

            // Transmission Delay
            GenApi::CIntegerPtr pStreamChannelFrameTransmissionDelay = pDevice->GetNodeMap()->GetNode("GevSCFTD");
            pStreamChannelFrameTransmissionDelay->SetValue(static_cast<int64_t>(i) * 80000);
            std::cout << TAB3 << "GevSCFTD: ";
            std::cout << Arena::GetNodeValue<int64_t>(pDevice->GetNodeMap(), "GevSCFTD") << "\n";
        }

        // Frame rate
        //    Raise AcquisitionFrameRate to its maximum so that it does not
        //    cap the PTPSync frame rate.
        GenApi::CFloatPtr pAcquisitionFrameRate = pDevice->GetNodeMap()->GetNode("AcquisitionFrameRate");
        pAcquisitionFrameRate->SetValue(pAcquisitionFrameRate->GetMax());

        // PTPSyncFrameRate
        GenApi::CFloatPtr pPTPSyncFrameRate = pDevice->GetNodeMap()->GetNode("PTPSyncFrameRate");
        pPTPSyncFrameRate->SetValue(PTPSYNC_FRAME_RATE);
    }

    // prepare system
    std::cout << TAB2 << "Prepare system\n";

    // Wait for devices to negotiate their PTP relationship
    //    Before starting any PTP-dependent actions, it is important to wait for
    //    the devices to complete their negotiation; otherwise, the devices may
    //    not yet be synced. Depending on the initial PTP state of each camera,
    //    it can take about 40 seconds for all devices to autonegotiate. Below,
    //    we wait for the PTP status of each device until there is only one
    //    'Master' and the rest are all 'Slaves'. During the negotiation phase,
    //    multiple devices may initially come up as Master so we will wait until
    //    the ptp negotiation completes.
    std::cout << TAB1 << "Wait for devices to negotiate. This can take up to about 40s.\n";

    int waitCount = 0;
    do
    {
        bool masterFound = false;
        bool restartSyncCheck = false;

        // check devices
        for (size_t j = 0; j < devices.size(); j++)
        {
            Arena::IDevice* pDevice = devices.at(j);

            // get PTP status
            GenICam::gcstring ptpStatus = Arena::GetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "PtpStatus");

            if (ptpStatus == "Master")
            {
                if (masterFound)
                {
                    // Multiple masters -- ptp negotiation is not complete
                    restartSyncCheck = true;
                    break;
                }
                masterFound = true;
            }
            else if (ptpStatus != "Slave")
            {
                // Uncalibrated state -- ptp negotiation is not complete
                restartSyncCheck = true;
                break;
            }
        }

        // A single master was found and all remaining cameras are slaves
        if (!restartSyncCheck && masterFound)
            break;

        std::this_thread::sleep_for(std::chrono::seconds(1));

        // for output
        if (waitCount % 10 == 0)
            std::cout << "\r" << ERASE_LINE << "\r" << TAB2 << std::flush;
        std::cout << "." << std::flush;
        waitCount++;
    } while (true);

    // start stream
    std::cout << "\n" << TAB1 << "Start stream\n";
    for (size_t i = 0; i < devices.size(); i++)
    {
        devices.at(i)->StartStream();
    }

    // get images and check timestamps
    std::cout << TAB1 << "Get images\n";
    for (size_t i = 0; i < NUM_IMAGES; i++)
    {
        for (size_t j = 0; j < devices.size(); j++)
        {
            Arena::IDevice* pDevice = devices.at(j);
            GenICam::gcstring deviceSerialNumber = Arena::GetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "DeviceSerialNumber");

            std::cout << TAB2 << "Image " << i << " from device " << deviceSerialNumber << "\n";

            // Compare timestamps
            //    Scheduling action commands amongst PTP synchronized devices results
            //    in synchronized images with synchronized timestamps.
            std::cout << TAB3 << "Timestamp: ";
            Arena::IImage* pImage = pDevice->GetImage(3000);
            std::cout << pImage->GetTimestamp() << "\n";

            // requeue buffer
            pDevice->RequeueBuffer(pImage);
        }
    }

    // stop stream
    std::cout << TAB1 << "Stop stream\n";
    for (size_t i = 0; i < devices.size(); i++)
    {
        devices.at(i)->StopStream();
    }
}

// =-=-=-=-=-=-=-=-=-
// =- PREPARATION -=-
// =- & CLEAN UP =-=-
// =-=-=-=-=-=-=-=-=-

int main()
{
    // flag to track when an exception has been thrown
    bool exceptionThrown = false;

    std::cout << "Cpp_PTPSync\n";

    try
    {
        // prepare example
        Arena::ISystem* pSystem = Arena::OpenSystem();
        pSystem->UpdateDevices(100);
        std::vector<Arena::DeviceInfo> deviceInfos = pSystem->GetDevices();
        if (deviceInfos.size() < 2)
        {
            if (deviceInfos.size() == 0)
                std::cout << "\nNo camera connected. Example requires at least 2 devices\n";
            else if (deviceInfos.size() == 1)
                std::cout << "\nOnly one device connected. Example requires at least 2 devices\n";

            std::cout << "Press enter to complete\n";

            // clear input
            while (std::cin.get() != '\n')
                continue;

            std::getchar();
            return 0;
        }

        std::vector<Arena::IDevice*> devices;
        for (size_t i = 0; i < deviceInfos.size(); i++)
        {
            devices.push_back(pSystem->CreateDevice(deviceInfos.at(i)));
        }

        // run example
        std::cout << "Commence example\n\n";
        PTPSyncCamerasAndAcquireImages(pSystem, devices);
        std::cout << "\nExample complete\n";

        // clean up example
        for (size_t i = 0; i < devices.size(); i++)
        {
            pSystem->DestroyDevice(devices.at(i));
        }
        Arena::CloseSystem(pSystem);
    }
    catch (GenICam::GenericException& ge)
    {
        std::cout << "\nGenICam exception thrown: " << ge.what() << "\n";
        exceptionThrown = true;
    }
    catch (std::exception& ex)
    {
        std::cout << "\nStandard exception thrown: " << ex.what() << "\n";
        exceptionThrown = true;
    }
    catch (...)
    {
        std::cout << "\nUnexpected exception thrown\n";
        exceptionThrown = true;
    }

    std::cout << "Press enter to complete\n";
    std::getchar();

    if (exceptionThrown)
        return -1;
    else
        return 0;
}

External Hardware Trigger Example Code

#include "stdafx.h"
#include "GenTL.h"

#ifdef __linux__
#pragma GCC diagnostic push
#pragma GCC diagnostic ignored "-Wunused-but-set-variable"
#endif

#include "GenICam.h"

#ifdef __linux__
#pragma GCC diagnostic pop
#endif

#include "ArenaApi.h"

#include <algorithm> // for std::find
#include <chrono>    // for std::chrono::seconds
#include <iostream>  // for std::cout
#include <thread>    // for std::this_thread::sleep_for
#include <vector>    // for std::vector

#define TAB1 "  "
#define TAB2 "    "
#define TAB3 "      "
#define ERASE_LINE "                            "

#define EXPOSURE_TIME 10000.0

// number of images to grab
#define NUM_IMAGES 500

// =-=-=-=-=-=-=-=-=-
// =-=- SETTINGS =-=-
// =-=-=-=-=-=-=-=-=-

// image timeout
#define TIMEOUT 20000

// =-=-=-=-=-=-=-=-=-
// =-=- EXAMPLE -=-=-
// =-=-=-=-=-=-=-=-=-

// trigger configuration and use
void ConfigureTriggerAndAcquireImage(Arena::ISystem* pSystem, std::vector<Arena::IDevice*>& devices)
{
    for (size_t i = 0; i < devices.size(); i++)
    {
        Arena::IDevice* pDevice = devices.at(i);
        GenICam::gcstring deviceSerialNumber = Arena::GetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "DeviceSerialNumber");

        std::cout << TAB2 << "DeviceSerialNumber " << i << " from device " << deviceSerialNumber << "\n";

        // Set trigger selector
        //    Set the trigger selector to FrameStart. When triggered, the device will
        //    start acquiring a single frame. This can also be set to
        //    AcquisitionStart or FrameBurstStart.
        std::cout << TAB1 << "Set trigger selector to FrameStart\n";
        Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "TriggerSelector", "FrameStart");

        // Set trigger source
        //    Use input line Line0 of the GPIO as the hardware trigger source,
        //    triggering on the rising edge. Level-sensitive activation modes
        //    do not interleave the packets.
        std::cout << TAB1 << "Set trigger source to Line0\n";
        Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "TriggerSource", "Line0");
        Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "TriggerActivation", "RisingEdge");

        // Optional, left disabled as in the original example:
        // Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "TriggerOverlap", "PreviousFrame");

        // Set trigger mode
        //    Enable trigger mode before starting the stream. Trigger mode
        //    cannot be turned on and off while the device is streaming.
        std::cout << TAB1 << "Enable trigger mode\n";
        Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "TriggerMode", "On");

        // Manually set exposure time
        //    In order to get synchronized images, the exposure time must be
        //    synchronized as well.
        std::cout << TAB3 << "Exposure: ";
        Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "ExposureAuto", "Off");
        Arena::SetNodeValue<double>(pDevice->GetNodeMap(), "ExposureTime", EXPOSURE_TIME);
        std::cout << Arena::GetNodeValue<double>(pDevice->GetNodeMap(), "ExposureTime") << "\n";

        // Synchronize devices by enabling PTP
        //    Enabling PTP on multiple devices causes them to negotiate amongst
        //    themselves so that there is a single master device while all the
        //    rest become slaves. The slaves' clocks all synchronize to the
        //    master's clock.
        std::cout << TAB3 << "PTP: ";
        Arena::SetNodeValue<bool>(pDevice->GetNodeMap(), "PtpEnable", true);
        std::cout << (Arena::GetNodeValue<bool>(pDevice->GetNodeMap(), "PtpEnable") ? "enabled" : "disabled") << "\n";

        // Use max supported packet size. We use transfer control to ensure
        // that only one camera is transmitting at a time.
        std::cout << TAB3 << "StreamAutoNegotiatePacketSize: ";
        Arena::SetNodeValue<bool>(pDevice->GetTLStreamNodeMap(), "StreamAutoNegotiatePacketSize", true);
        std::cout << Arena::GetNodeValue<bool>(pDevice->GetTLStreamNodeMap(), "StreamAutoNegotiatePacketSize") << "\n";

        // Enable stream packet resend
        std::cout << TAB3 << "StreamPacketResendEnable: ";
        Arena::SetNodeValue<bool>(pDevice->GetTLStreamNodeMap(), "StreamPacketResendEnable", true);
        std::cout << Arena::GetNodeValue<bool>(pDevice->GetTLStreamNodeMap(), "StreamPacketResendEnable") << "\n";

        // Set acquisition mode to 'Continuous'
        std::cout << TAB3 << "Set acquisition mode to 'Continuous'\n";
        Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "AcquisitionMode", "Continuous");

        // Set StreamBufferHandlingMode to 'NewestOnly'
        std::cout << TAB3 << "Set StreamBufferHandlingMode to 'NewestOnly'\n";
        Arena::SetNodeValue<GenICam::gcstring>(pDevice->GetTLStreamNodeMap(), "StreamBufferHandlingMode", "NewestOnly");

        // Packet delay and transmission delay from the delay calculator
        //    Every camera uses the same packet delay of 240000 ns. Camera i
        //    delays each frame by i slots of 80000 ns (0, 80000, 160000 and
        //    240000 ns). Only the first four cameras are configured, as in
        //    the 4-camera example.
        if (i < 4)
        {
            // Packet Delay
            GenApi::CIntegerPtr pStreamChannelPacketDelay = pDevice->GetNodeMap()->GetNode("GevSCPD");
            pStreamChannelPacketDelay->SetValue(240000);
            std::cout << TAB3 << "GevSCPD: ";
            std::cout << Arena::GetNodeValue<int64_t>(pDevice->GetNodeMap(), "GevSCPD") << "\n";

            // Transmission Delay
            GenApi::CIntegerPtr pStreamChannelFrameTransmissionDelay = pDevice->GetNodeMap()->GetNode("GevSCFTD");
            pStreamChannelFrameTransmissionDelay->SetValue(static_cast<int64_t>(i) * 80000);
            std::cout << TAB3 << "GevSCFTD: ";
            std::cout << Arena::GetNodeValue<int64_t>(pDevice->GetNodeMap(), "GevSCFTD") << "\n";
        }
    }

    // prepare system
    std::cout << TAB2 << "Prepare system\n";

    // Wait for devices to negotiate their PTP relationship
    //    Before starting any PTP-dependent actions, it is important to wait for
    //    the devices to complete their negotiation; otherwise, the devices may
    //    not yet be synced. Depending on the initial PTP state of each camera,
    //    it can take about 40 seconds for all devices to autonegotiate. Below,
    //    we wait for the PTP status of each device until there is only one
    //    'Master' and the rest are all 'Slaves'. During the negotiation phase,
    //    multiple devices may initially come up as Master so we will wait until
    //    the ptp negotiation completes.
    std::cout << TAB1 << "Wait for devices to negotiate. This can take up to about 40s.\n";

    int waitCount = 0;
    do
    {
        bool masterFound = false;
        bool restartSyncCheck = false;

        // check devices
        for (size_t j = 0; j < devices.size(); j++)
        {
            Arena::IDevice* pDevice = devices.at(j);

            // get PTP status
            GenICam::gcstring ptpStatus = Arena::GetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "PtpStatus");

            if (ptpStatus == "Master")
            {
                if (masterFound)
                {
                    // Multiple masters -- ptp negotiation is not complete
                    restartSyncCheck = true;
                    break;
                }
                masterFound = true;
            }
            else if (ptpStatus != "Slave")
            {
                // Uncalibrated state -- ptp negotiation is not complete
                restartSyncCheck = true;
                break;
            }
        }

        // A single master was found and all remaining cameras are slaves
        if (!restartSyncCheck && masterFound)
            break;

        std::this_thread::sleep_for(std::chrono::seconds(1));

        // for output
        if (waitCount % 10 == 0)
            std::cout << "\r" << ERASE_LINE << "\r" << TAB2 << std::flush;
        std::cout << "." << std::flush;
        waitCount++;
    } while (true);

    // Start stream
    //    When trigger mode is off and the acquisition mode is set to stream
    //    continuously, starting the stream will have the camera begin acquiring
    //    a steady stream of images. However, with trigger mode enabled, the
    //    device will wait for the trigger before acquiring any.
    std::cout << "\n" << TAB1 << "Start stream\n";
    for (size_t i = 0; i < devices.size(); i++)
    {
        devices.at(i)->StartStream();
    }

    // Previous timestamp of each device, used to report its frame rate
    std::vector<uint64_t> timestampNsPrevious(devices.size(), 0);

    // Get image
    //    Once an image has been triggered, it can be retrieved. If no image has
    //    been triggered, trying to retrieve an image will hang for the duration
    //    of the timeout and then throw an exception.
    std::cout << TAB2 << "Get image\n";
    for (size_t i = 0; i < NUM_IMAGES; i++)
    {
        for (size_t j = 0; j < devices.size(); j++)
        {
            Arena::IDevice* pDevice = devices.at(j);
            GenICam::gcstring deviceSerialNumber = Arena::GetNodeValue<GenICam::gcstring>(pDevice->GetNodeMap(), "DeviceSerialNumber");

            std::cout << TAB2 << "Image " << i << " from device " << deviceSerialNumber << "\n";

            // Compare timestamps
            //    Triggering PTP synchronized devices from the same source
            //    results in images with closely matching timestamps.
            std::cout << TAB3 << "Timestamp: ";
            Arena::IImage* pImage = pDevice->GetImage(30000);
            std::cout << pImage->GetTimestamp() << "\n";
            std::cout << "Grabbed FrameId " << pImage->GetFrameId() << std::endl;

            // Report the frame rate from consecutive frames of the same device
            uint64_t timestampNs = pImage->GetTimestampNs();
            std::cout << " (timestamp (ns): " << timestampNs;
            if (timestampNsPrevious[j] != 0)
            {
                uint64_t difference = timestampNs - timestampNsPrevious[j];
                std::cout << "; FrameRate : " << 1 / (difference * 1E-9) << " FPS";
            }
            std::cout << ")\n";
            timestampNsPrevious[j] = timestampNs;

            // requeue buffer
            pDevice->RequeueBuffer(pImage);
        }
    }

    // stop stream
    std::cout << TAB1 << "Stop stream\n";
    for (size_t i = 0; i < devices.size(); i++)
    {
        devices.at(i)->StopStream();
    }
}

// =-=-=-=-=-=-=-=-=-
// =- PREPARATION -=-
// =- & CLEAN UP =-=-
// =-=-=-=-=-=-=-=-=-

int main()
{
    // flag to track when an exception has been thrown
    bool exceptionThrown = false;

    std::cout << "Cpp_Trigger\n";

    try
    {
        // prepare example
        Arena::ISystem* pSystem = Arena::OpenSystem();
        pSystem->UpdateDevices(1000);
        std::vector<Arena::DeviceInfo> deviceInfos = pSystem->GetDevices();
        if (deviceInfos.size() == 0)
        {
            std::cout << "\nNo camera connected\nPress enter to complete\n";
            std::getchar();
            return 0;
        }

        // Only open the cameras with these serial numbers. Replace them
        // with the serial numbers of your own cameras.
        std::vector<Arena::IDevice*> devices;
        for (size_t i = 0; i < deviceInfos.size(); i++)
        {
            if ((deviceInfos[i].SerialNumber() == "192800283") ||
                (deviceInfos[i].SerialNumber() == "181700080") ||
                (deviceInfos[i].SerialNumber() == "193300005") ||
                (deviceInfos[i].SerialNumber() == "200600418"))
            {
                devices.push_back(pSystem->CreateDevice(deviceInfos.at(i)));
            }
        }

        // run example
        std::cout << "Commence example\n\n";
        ConfigureTriggerAndAcquireImage(pSystem, devices);
        std::cout << "\nExample complete\n";

        // clean up example
        for (size_t i = 0; i < devices.size(); i++)
        {
            pSystem->DestroyDevice(devices.at(i));
        }
        Arena::CloseSystem(pSystem);
    }
    catch (GenICam::GenericException& ge)
    {
        std::cout << "\nGenICam exception thrown: " << ge.what() << "\n";
        exceptionThrown = true;
    }
    catch (std::exception& ex)
    {
        std::cout << "Standard exception thrown: " << ex.what() << "\n";
        exceptionThrown = true;
    }
    catch (...)
    {
        std::cout << "Unexpected exception thrown\n";
        exceptionThrown = true;
    }

    std::cout << "Press enter to complete\n";
    std::getchar();

    if (exceptionThrown)
        return -1;
    else
        return 0;
}